Refact.ai Updates for April 2024

In the April edition of Refact.ai updates:

Changes in Models

- Starcoder2/3b is now available for all Refact.ai users for code completion!

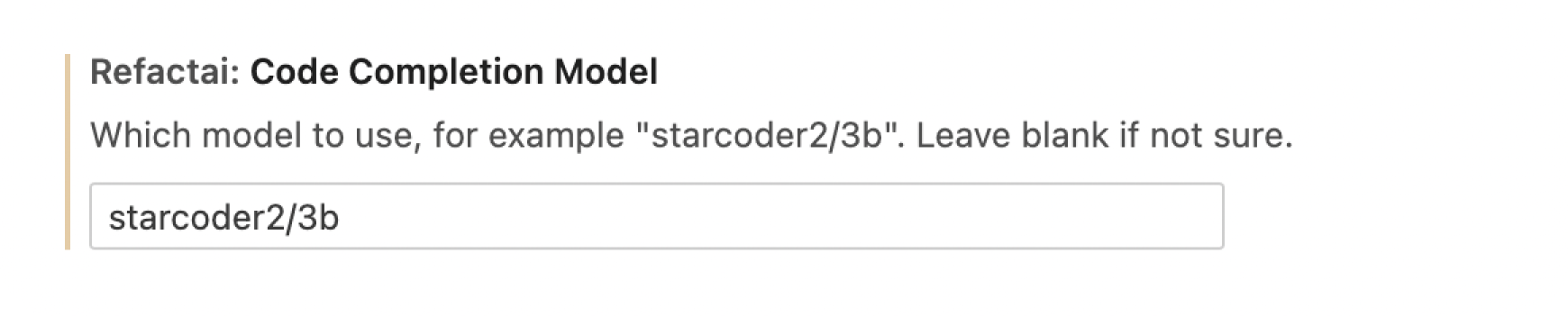

To use this model, go to the plugin settings and type starcoder2/3b in the Code Completion Model field.

- New models: Claude-3 is now available for Refact.ai Enterprise and Self-Hosted. To use it, enter your personal API key in the 3rd party settings.

Enterprise and Self-hosted Updates

Docker v1.6.0 and v1.6.1:

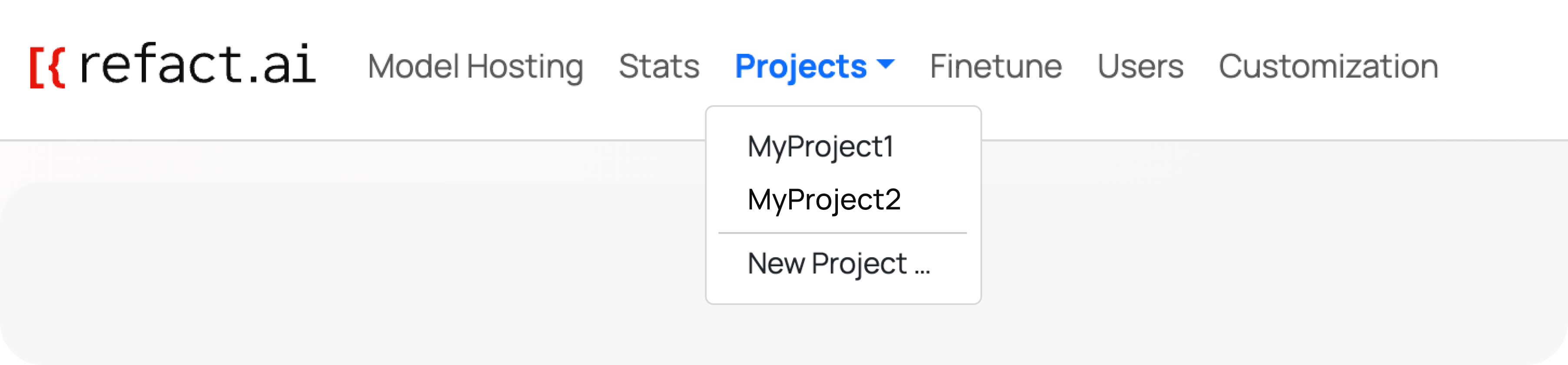

- You can now create multiple source projects in Refact.ai:

  - Each project can use its own set of sources

  - You can fine-tune on demand for a specific project

- Multi-GPU Support

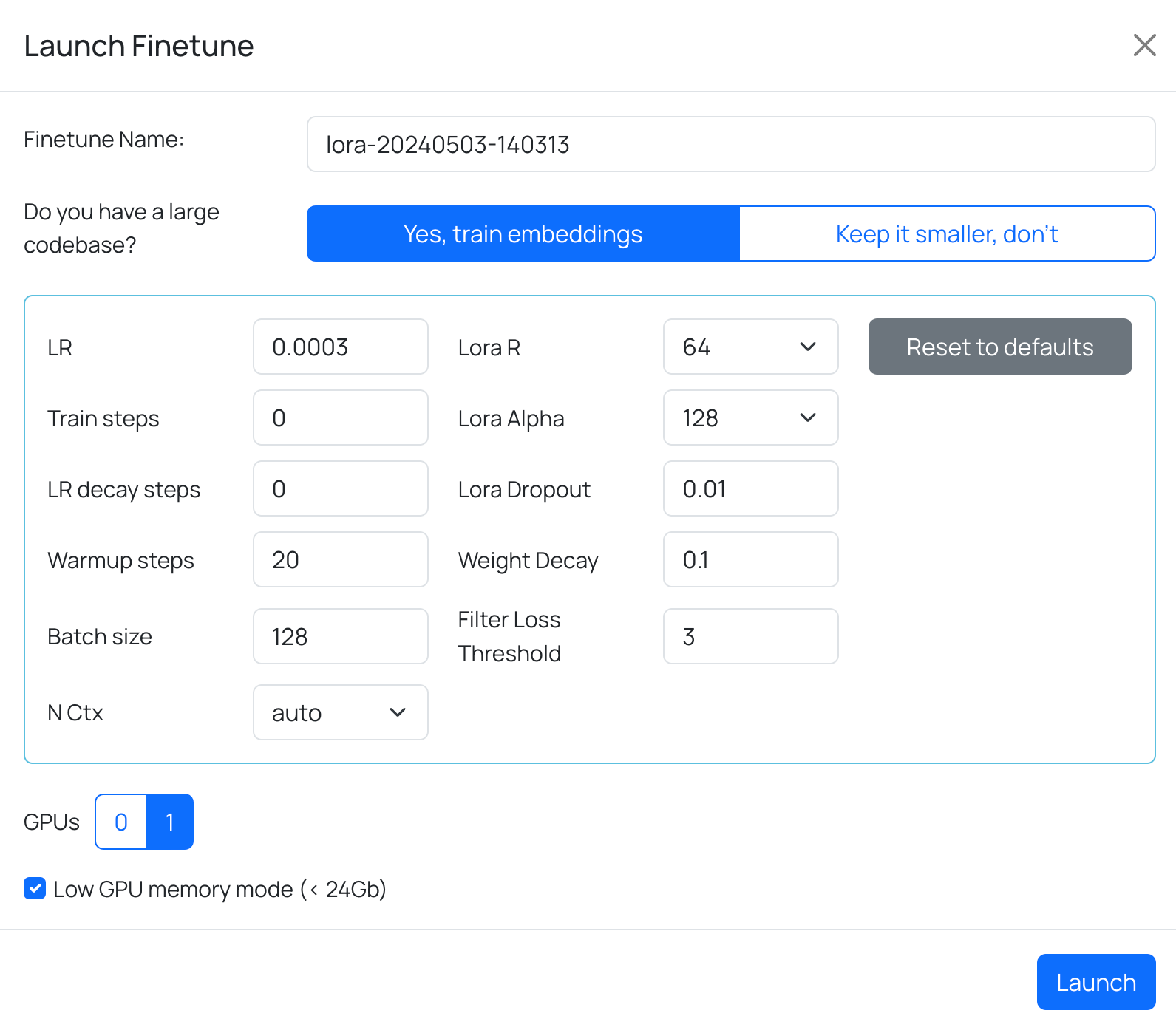

- Unified Fine-Tuning Process: We’ve simplified the fine-tuning process by merging the filter and fine-tuning steps into one process

- Simultaneous Fine-Tuning: Execute multiple fine-tuning processes at the same time

- LoRA Merge Support: Load a model patched with LoRA weights (note: requires substantial RAM)

- Context Size Switching Mechanism: You can change the max context value for models depending on your needs — small context for less memory usage and faster operation or large context for deeper insights

- 3rd Party LLMs: Context Increased to 16,000

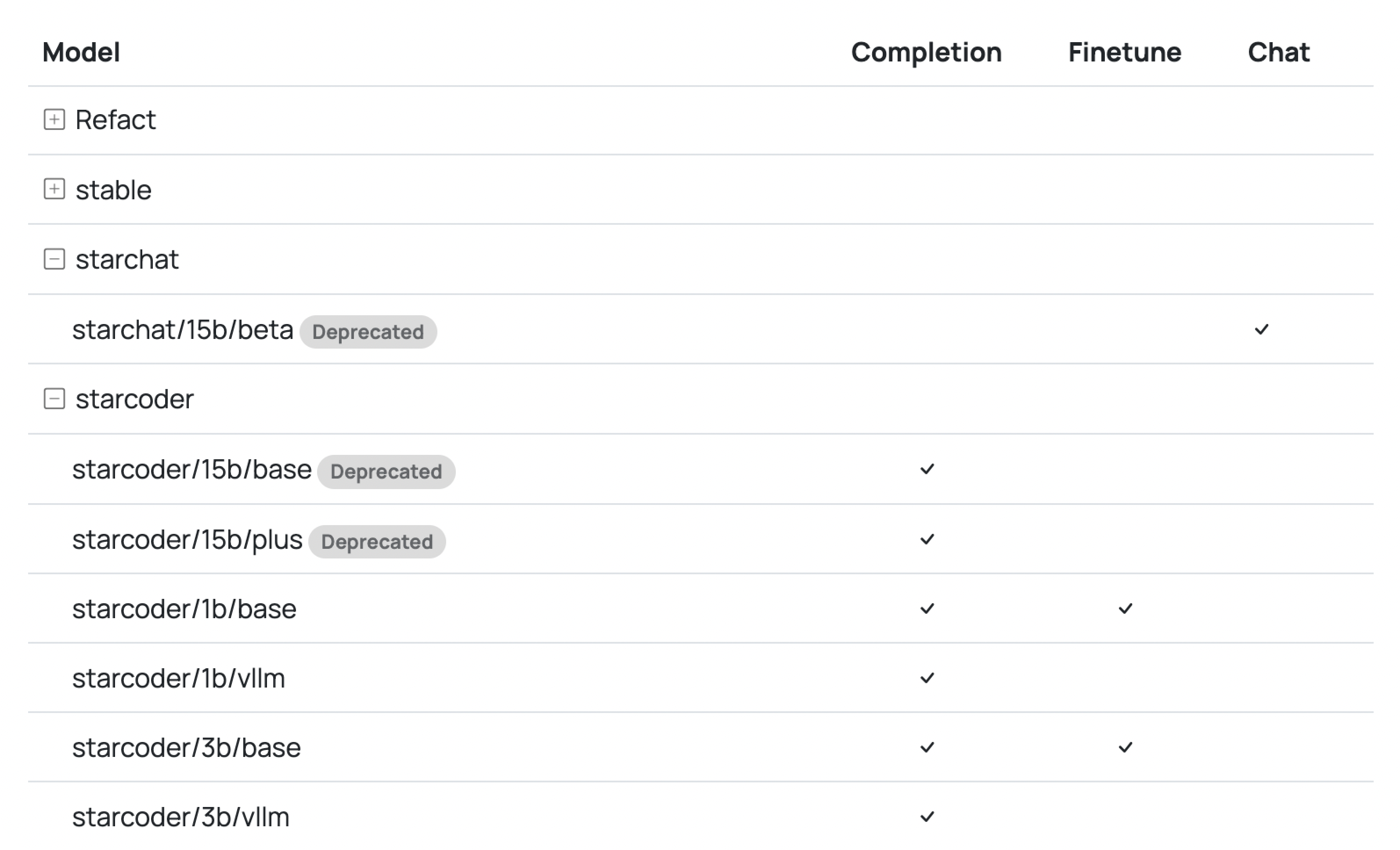

- Model Deprecation: Our UI updates now flag models slated for removal. This ensures you’re always working with the latest and most efficient models.

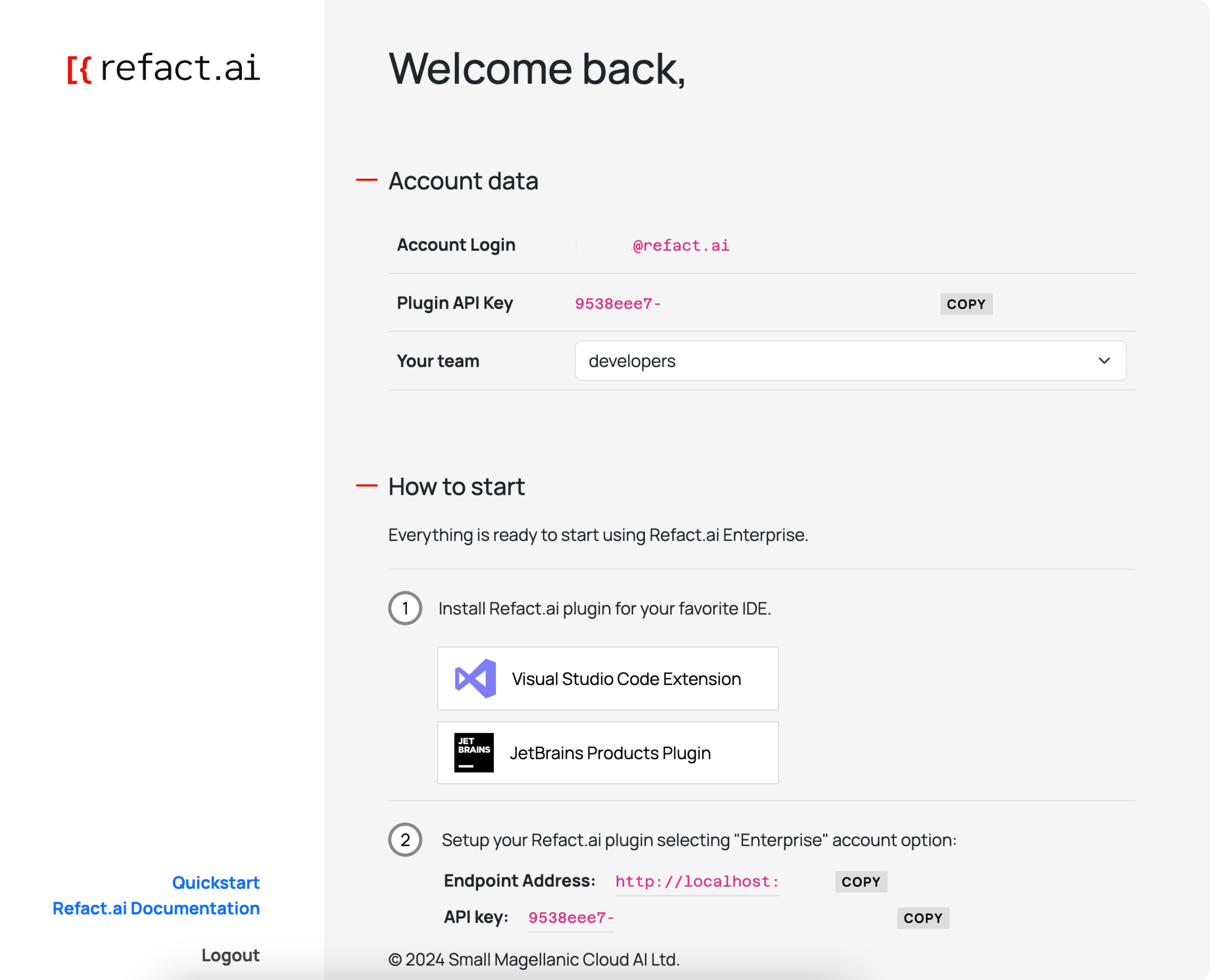
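For readers wondering what the LoRA merge mentioned above involves, here is a minimal sketch of the general technique, not Refact.ai's actual code. A LoRA adapter stores two small matrices, B and A, and merging folds their scaled product into the base weight: W' = W + (alpha / rank) * B * A. The function names and the toy values below are hypothetical and chosen only for illustration.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def merge_lora(weight, lora_b, lora_a, alpha, rank):
    """Return weight + (alpha / rank) * (lora_b @ lora_a) as a new matrix."""
    scale = alpha / rank
    delta = matmul(lora_b, lora_a)
    return [
        [w + scale * d for w, d in zip(w_row, d_row)]
        for w_row, d_row in zip(weight, delta)
    ]


# Toy 2x2 base weight with a rank-1 adapter (all values are made up).
base = [[1.0, 0.0], [0.0, 1.0]]
b = [[1.0], [2.0]]  # shape (2, rank)
a = [[0.5, 0.5]]    # shape (rank, 2)
merged = merge_lora(base, b, a, alpha=2.0, rank=1)
print(merged)  # [[2.0, 1.0], [2.0, 3.0]]
```

The merged matrix has the same shape as the base weight, so a merge has to hold full-size weights in memory rather than just the small adapter. That is likely one reason the feature needs substantial RAM.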

For Enterprise Users we have:

- Customization Tab: Personalize system prompts and toolbox commands for your team

- Easier User Access Control: We’ve integrated Keycloak for secure and convenient user access management. Check out the documentation for more information. Later, we’ll add more access options for teams, e.g. through Google or GitHub.

Plugins Updates

- Manual Choice of Completion Model in Plugin Settings (available in VS Code; JetBrains coming soon)

- Better FIM Debug Page: It’s now clearer and more user-friendly, helping you understand the processes behind AI models (available in VS Code only)

- Usability Improvements and Bug Fixes

Don’t forget to update your plugins! Old ones will work, but with limited features.

Latest versions are: JetBrains - 1.2.22, VS Code - 3.1.112.
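If you are curious what FIM (fill-in-the-middle) means on the debug page, the sketch below shows the general idea: the code before and after the cursor is wrapped in special tokens, and the model generates the missing middle. It assumes the sentinel tokens used by StarCoder-family models (`<fim_prefix>`, `<fim_suffix>`, `<fim_middle>`). The function name is hypothetical, and the exact prompt the Refact.ai plugins send may differ.

```python
def build_fim_prompt(prefix, suffix):
    """Wrap the code around the cursor in StarCoder-style FIM tokens (assumed format)."""
    return f"<fim_prefix>{prefix}<fim_suffix>{suffix}<fim_middle>"


# The cursor sits right after "return " and the model is asked to fill the gap.
code_before = "def add(a, b):\n    return "
code_after = "\n\nprint(add(1, 2))\n"
prompt = build_fim_prompt(code_before, code_after)
print(prompt)
```

Whatever the model generates after the final token is the completion inserted at the cursor.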

Refact.ai Blog Navigation

Now, you can explore articles by categories and use the new search feature to find specific topics.

RAG Pre-release: Project Awareness for Contextual Code Completions and Chat — Try Now!

We’ve launched a pre-release of RAG (Retrieval-Augmented Generation) at Refact.ai! We understand how important it is for an AI assistant to be accurate, and RAG improves this by using your project’s data to make AI suggestions more relevant.

Here’s how it works:

- RAG analyzes your project’s data, including the file structure (AST), and uses a vector-based search to provide better AI completions when you’re writing code.

- In chat, you can now attach any file with @file, and use your project as context with @definition, @references, or @workspace commands.
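To give a feel for the vector-based search step, here is a toy sketch, not the Refact.ai implementation (which is Rust-based). Each piece of the project is turned into a vector, the query is turned into a vector the same way, and the closest pieces are returned as context. The bag-of-words `embed` function is a stand-in for a real embedding model, and the file names and contents are invented.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy embedding: a bag-of-words count vector (stand-in for a real model)."""
    return Counter(re.findall(r"[a-z_]+", text.lower()))


def cosine(u, v):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(u[t] * v[t] for t in u if t in v)
    norm_u = math.sqrt(sum(c * c for c in u.values()))
    norm_v = math.sqrt(sum(c * c for c in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


def top_k(query, chunks, k=1):
    """Return the names of the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda name: cosine(q, embed(chunks[name])), reverse=True)
    return ranked[:k]


# Hypothetical project snippets, keyed by file name.
chunks = {
    "auth.py": "def login(user, password): check password hash for user",
    "math_utils.py": "def mean(values): return sum(values) / len(values)",
    "db.py": "def connect(url): open database connection pool",
}
best = top_k("verify user password on login", chunks, k=1)
print(best)  # ['auth.py']
```

In a real setup, the retrieved snippets would be added to the model's context before it produces a completion or chat answer.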

Everything behind RAG is fully open source and Rust-based.

RAG is available for all Refact.ai users! For individual users, it works twice as well in the Pro version: with a context size of 4096, it can delve deeper into your project for more accurate output.

RAG is currently in pre-release, but you can enable it by following the simple two-step instructions in our Discord, #rag channel. Go try it now, it’s really great!

We hope you liked the April updates to the Refact.ai AI coding assistant! Our team is building it as the secure Copilot alternative with advanced customisation to help developers boost productivity with AI!

- Join our Discord to discuss new functions or share feedback

- Check Refact.ai GitHub for our source code — Refact.ai is an open-source AI coding assistant and welcomes your contributions!

- Please rate us on VS Code and JetBrains

- And stay (fine-)tuned for further updates!